MLOps unlocks the key to AI

Many machine learning models never make it from the testing phase into production, which is a challenge for companies working in machine learning. Many companies are organised in silos, which can complicate model creation, management and implementation.

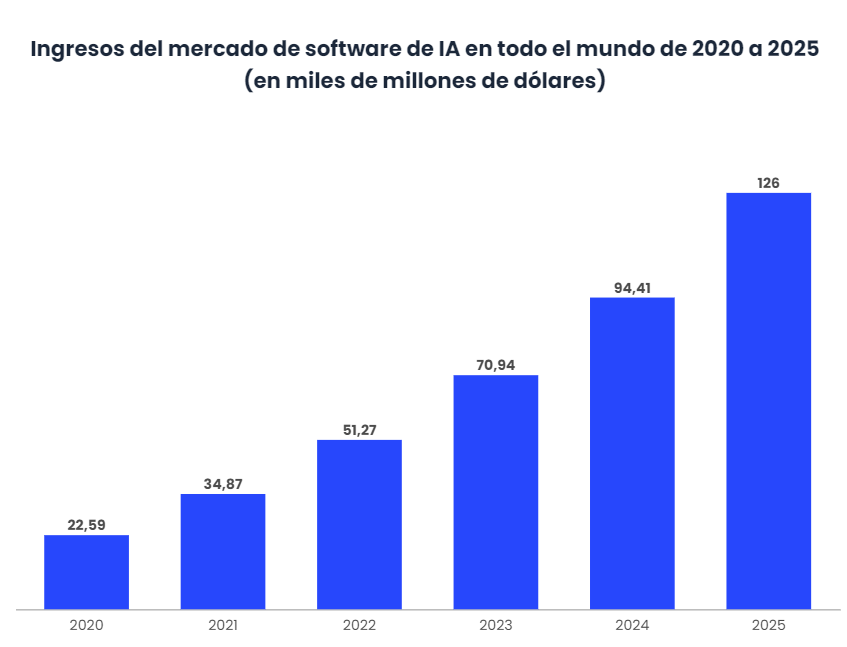

It is estimated that AI represents a business opportunity of 1.2 billion dollars and that 85% of companies already use AI. In some European countries, such as Spain, 45% of economic gains in 2030 are expected to come from the commercial application of AI solutions [1].

Against this background, Machine Learning Model Operationalization Management (MLOps) has emerged as a practice that gives companies the right mix of data science, data engineering and DataOps expertise to deploy and scale machine learning effectively and generate high business value. MLOps provides an end-to-end (E2E) machine learning development process for designing, building and managing reproducible ML-driven software.

The most fundamental features of MLOps, including how it differs from traditional software engineering, are the following:

- It aims to unify the release process for machine learning models and new software applications.

- It facilitates automated testing, such as data validation, ML model testing and model integration testing.

- It introduces agile principles into ML projects.

- It supports models and datasets for building new models within CI/CD (continuous integration and continuous delivery) systems.

- It reduces the technical difficulties of modelling.

- It is independent of language, framework, platform and infrastructure.
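The automated-testing feature can be made more concrete with a minimal Python sketch of checks a CI/CD job might run before releasing a model: a data validation step and a simple model quality gate. The schema, the column names (`age`, `income`) and the 0.8 accuracy threshold are hypothetical and exist only for illustration. Real projects would usually rely on a dedicated validation tool and project-specific rules.

```python
from __future__ import annotations

# Hypothetical schema for illustration only: column -> (accepted types, min, max).
SCHEMA = {
    "age": ((int,), 0, 120),
    "income": ((int, float), 0, 10_000_000),
}


def validate_rows(rows: list[dict]) -> list[str]:
    """Data validation: return readable errors; an empty list means the batch passes."""
    errors = []
    for i, row in enumerate(rows):
        for column, (accepted_types, low, high) in SCHEMA.items():
            if column not in row:
                errors.append(f"row {i}: missing column '{column}'")
                continue
            value = row[column]
            if not isinstance(value, accepted_types):
                errors.append(f"row {i}: '{column}' has unexpected type {type(value).__name__}")
            elif not low <= value <= high:
                errors.append(f"row {i}: '{column}'={value} outside [{low}, {high}]")
    return errors


def model_quality_gate(accuracy: float, threshold: float = 0.8) -> bool:
    """Model test: block the release if held-out accuracy falls below the (assumed) threshold."""
    return accuracy >= threshold


def ci_checks_pass(rows: list[dict], holdout_accuracy: float) -> bool:
    """A check a CI job might run before promoting a new model version."""
    return not validate_rows(rows) and model_quality_gate(holdout_accuracy)
```

For example, `ci_checks_pass([{"age": 30, "income": 52000}], 0.85)` returns `True`. The same call with `"age": "30"` (a string) returns `False` because the data validation step fails. Keeping each check as a separate function makes it easy to run the checks independently, or as one gate, in a pipeline.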

In its early stages, AI development involves many manual, complex and siloed processes spread across a company's teams. As a result, model development and retraining slow down, and new iterations are difficult to launch. In this setting, continuous integration and delivery are often overlooked and models are rarely monitored, so performance degradation or behavioural deviations go unnoticed.

This limits the potential value of AI, and the more models are developed and implemented, the greater the challenge becomes. Without an MLOps approach, teams can become mired in failures that take time away from continuous innovation.

MLOps creates a framework for orchestrating and automating the ML pipeline, enabling rapid model development and experimentation through continuous training. Continuous integration is supported by automated testing and modular pipeline components. Furthermore, collaboration and alignment between the development and production environments simplify handoffs, which supports continuous delivery. All of this is complemented by continuous monitoring, which provides performance feedback based on live data, so models can be retrained and optimised over time.
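Continuous monitoring can be illustrated with a small Python sketch. It tracks accuracy over a sliding window of recent live predictions and flags the model for retraining when accuracy falls too far below the level measured at deployment. The class name, window size and tolerance are assumptions made for illustration. The sketch also assumes that ground-truth labels for live predictions eventually become available.

```python
from __future__ import annotations

from collections import deque
from typing import Optional


class PerformanceMonitor:
    """Hypothetical monitor: flags retraining when live accuracy degrades.

    Assumes ground-truth labels for live predictions eventually arrive, and treats a
    drop of more than `tolerance` below the deployment-time accuracy as degradation.
    """

    def __init__(self, baseline_accuracy: float, window_size: int = 100, tolerance: float = 0.05):
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance
        # Only the most recent outcomes are kept (True = correct prediction).
        self.outcomes: deque = deque(maxlen=window_size)

    def record(self, prediction, label) -> None:
        """Store whether a live prediction matched its ground-truth label."""
        self.outcomes.append(prediction == label)

    def live_accuracy(self) -> Optional[float]:
        """Accuracy over the current window, or None if no feedback has arrived yet."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self) -> bool:
        """True when live accuracy has dropped below baseline minus tolerance."""
        accuracy = self.live_accuracy()
        if accuracy is None:
            return False
        return accuracy < self.baseline_accuracy - self.tolerance
```

In an orchestrated pipeline, a `True` result from `needs_retraining()` would typically trigger the continuous-training job rather than a manual intervention. A sliding window, instead of an all-time average, keeps the signal responsive to recent behaviour changes.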

MLOps can therefore be described as DevOps for machine learning projects: a methodology that facilitates the implementation of ML models, focusing on tools and methods that improve process performance.

Getting started with MLOps

Companies are putting more AI projects into production every day, so they will need an MLOps-backed approach. They therefore need to identify and adopt the tools their processes require, which adds value and makes them more competitive.

For organisations that have not yet implemented AI processes, MLOps should be considered from the very beginning. Having a strategy in place up front is essential for the work to start delivering value, and setting aside time to plan how MLOps will be optimised makes continuous integration and delivery easier. The first step is to involve every team in the process, i.e. data scientists and data engineers, infrastructure and DevOps teams, software developers, business analysts, architects and IT leaders, so that together they can investigate and develop a comprehensive strategy.

Each company makes its own decisions throughout the AI lifecycle, and the planning and organisation of the work will vary according to the strategy it defines. However, some common process flows serve as a starting point for identifying solutions. This process has five initial stages:

- Define objectives and key results with KPIs: understanding the KPIs of each business is the first step in the process. This phase is non-technical, but it is fundamental for collaboration between data stewards and system experts, and for identifying the data relevant to ML model implementation.

- Data acquisition requires data scientists to work with data engineers to discover the key information needed for machine learning development. This information must be integrated into cloud data lakes, have data quality rules applied to it, and be made available for modelling. For example, Informatica Enterprise Data Catalog (EDC), Cloud Mass Ingestion (CMI), Data Engineering Integration (DEI), Data Engineering Quality (DEQ), Data Engineering Streaming (DES) and Enterprise Data Preparation (EDP) support the processing of these data pipelines.

- Model development is at the heart of the MLOps framework. Once KPIs are defined and datasets have been remediated, data scientists can develop models iteratively until expectations are met. Some iterations may involve collaboration with other roles, such as data engineers or data stewards. ML development tools and processes integrate with data engineering to support this stage.

- Next comes model implementation. With the data pipelines established in the earlier stages, the data engineer integrates the ML model developed by the data scientist and validates it against production data. This further validates the KPIs, after which the data pipeline is deployed into production together with the DataOps teams for ongoing use and monitoring. The integration capabilities of data engineering help bring the ML model into the production process.

- Finally comes monitoring: measuring the model's metrics and supervising its behaviour. The DataOps team can take on these functions to ensure the model keeps delivering value and to increase trust in ML. In this way, processes can be established across the whole software development lifecycle. Data engineering can also be used to track business metrics and data profiles.
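The five stages can be sketched as a toy Python pipeline that shows how each stage hands off to the next. Everything here is hypothetical. The KPI is plain accuracy, the "model" is a single decision threshold on one feature, and the data quality rule only drops incomplete or invalid rows. The sketch does not show how Informatica or any other platform implements these stages.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional

Row = tuple  # (feature value or None, label)


@dataclass
class Kpi:
    """Stage 1: a business objective expressed as a measurable target (hypothetical)."""
    name: str
    target: float


def acquire_data(raw_rows: list[Row]) -> list[Row]:
    """Stage 2: apply a simple data quality rule (drop missing features or invalid labels)."""
    return [(x, y) for x, y in raw_rows if x is not None and y in (0, 1)]


def accuracy(threshold: float, rows: list[Row]) -> float:
    """Share of rows where 'predict 1 if x >= threshold' matches the label."""
    if not rows:
        return 0.0
    correct = sum((1 if x >= threshold else 0) == y for x, y in rows)
    return correct / len(rows)


def develop_model(rows: list[Row], kpi: Kpi, candidates: list[float]) -> Optional[float]:
    """Stage 3: iterate over candidate models until one meets the KPI."""
    for threshold in candidates:
        if accuracy(threshold, rows) >= kpi.target:
            return threshold
    return None  # No candidate met expectations; go back and iterate further.


def validate(threshold: float, production_rows: list[Row], kpi: Kpi) -> bool:
    """Stage 4: confirm the KPI still holds on production data before deployment."""
    return accuracy(threshold, production_rows) >= kpi.target


def monitor(threshold: float, live_rows: list[Row], kpi: Kpi) -> dict:
    """Stage 5: report the live metric and whether the KPI is still met."""
    live = accuracy(threshold, live_rows)
    return {"metric": kpi.name, "value": live, "kpi_met": live >= kpi.target}
```

With `Kpi("accuracy", 0.9)` and training rows `[(0.1, 0), (0.2, 0), (0.8, 1), (0.9, 1)]`, `develop_model` rejects a threshold of 0.05 (accuracy 0.5) and accepts 0.5 (accuracy 1.0). Each stage is a separate function so that it could be owned by a different team and automated independently, which is the point the stages above make.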

Conclusions

Any company evaluating the creation of machine learning models and processes should build an MLOps strategy, even if that strategy is still at an early stage. Platforms are also available to help manage the machine learning lifecycle. It is therefore vitally important for any company that wants to introduce AI to prioritise the functions and capabilities it needs, taking its existing ecosystem into account, and to define its blueprint.

Although there are established paths to implementing MLOps, there are different methodologies for operationalising AI. To succeed, a company must first focus on the upfront aspects of the implementation and on how they overlap with the machine learning operations layer of the enterprise. MLOps addresses the technical side of this challenge, helping companies of any size succeed and deliver value.